NEWS

-

2026-04-28

Our paper "Mind Your Steps: A General Learning Framework for Accurate Humanoid Foothold Tracking" has been accepted at Robotics: Science and Systems (RSS) 2026!

-

2026-03-01

I joined the Shanghai Research Institute for Intelligent Autonomous Systems (SRIAS) as an Associate Professor!

-

Our workshop LeaPRiDE was successfully held at IROS 2025!

-

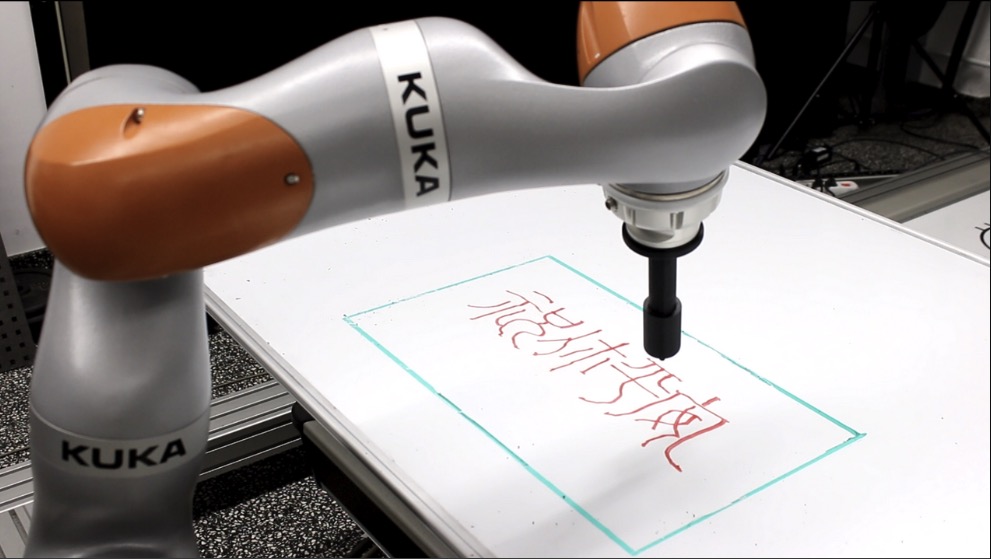

Our Paper: "Morphologically Symmetric Reinforcement Learning for Ambidextrous Bimanual Manipulation" has been accepted at CoRL 2025!

-

Our Workshop Proposal: "LeaPRiDE: Learning, Planning, and Reasoning in Dynamic Environments" has been accepted at IROS 2025!

-

Our Paper: "Maximum Total Correlation Reinforcement Learning" has been accepted at ICML 2025!

-

2025-04-21

I have been selected as a member of "R:SS Pioneers 2025"!

-

Our Paper: "Distilling Contact Planning for Fast Trajectory Optimization in Robot Air Hockey" has been accepted at RSS 2025!

-

Our Paper: "Safe Reinforcement Learning on the Constraint Manifold: Theory and Applications" has been accepted in T-RO.

-

Our Paper: "Adaptive Control based Friction Estimation for Tracking Control of Robot Manipulators" has been accepted in RA-L!

-

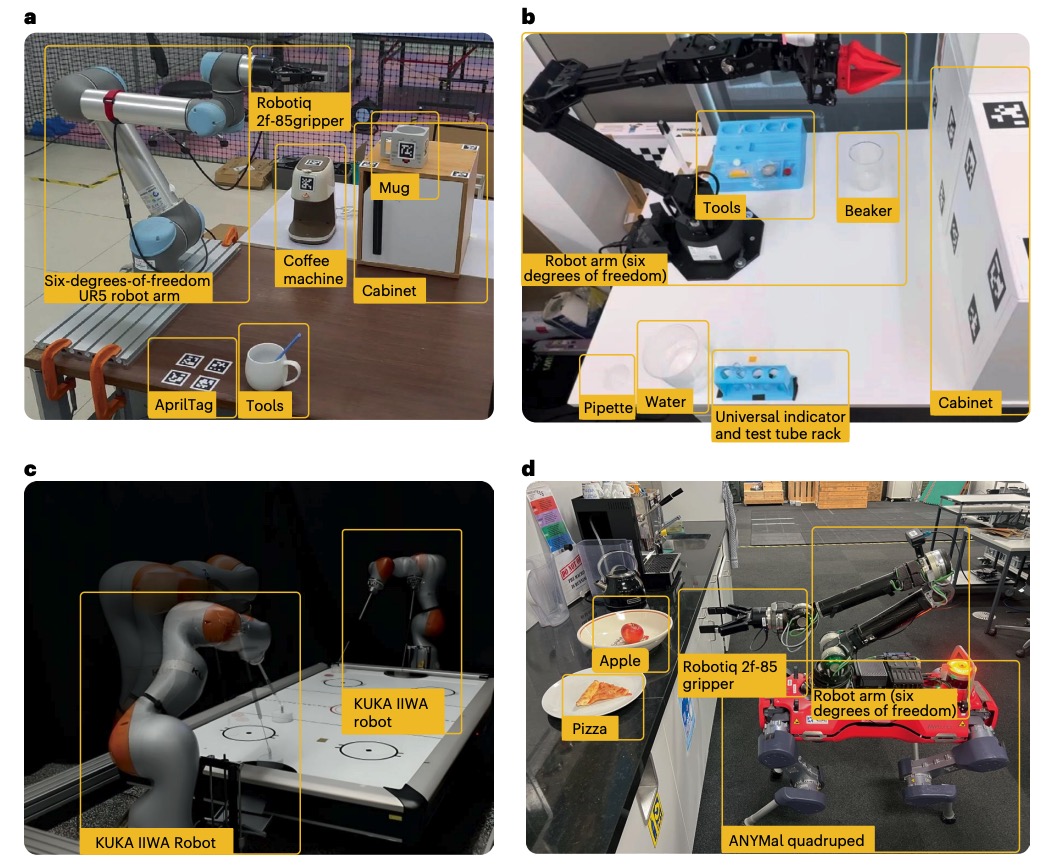

One paper has been accepted in the NeurIPS Datasets and Benchmarks Track:

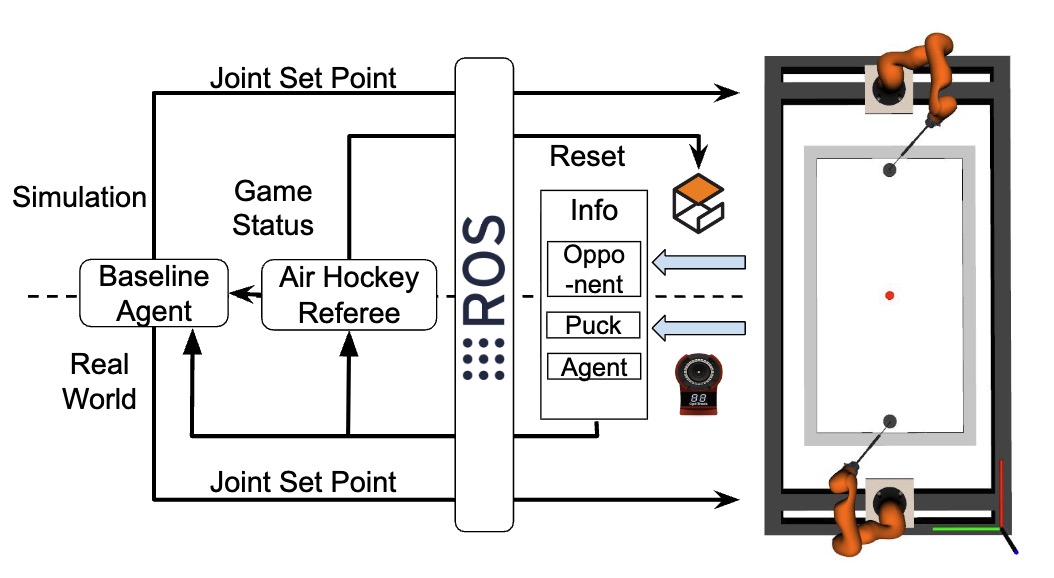

"A Retrospective on the Robot Air Hockey Challenge: Benchmarking Robust, Reliable, and Safe Learning Techniques for Real-world Robotics"

-

Two of our papers have been accepted at CoRL:

"Bridging the Gap Between Learning-to-Plan, Motion Primitives and Safe Reinforcement Learning"

"Handling Long-Term Safety and Uncertainty in Safe Reinforcement Learning"

-

Our paper "ROSCOM: Robust Safe Reinforcement Learning on Stochastic Constraint Manifolds" has been accepted in the IEEE Transactions on Automation Science and Engineering (TASE).

-

We organized a workshop at NeurIPS 2023 associated with "The Robot Air Hockey Challenge: Robust, Reliable, and Safe Learning Techniques for Real-world Robotics"!

-

Our paper "Fast Kinodynamic Planning on the Constraint Manifold with Deep Neural Networks" has been accepted for publication in the IEEE Transactions on Robotics (T-RO).

-

Our competition "The Robot Air Hockey Challenge: Robust, Reliable, and Safe Learning Techniques for Real-world Robotics" has been accepted at the Conference on Neural Information Processing Systems (NeurIPS) 2023!

-

Our paper "Composable Energy Policies for Reactive Motion Generation and Reinforcement Learning" has been accepted for publication in the International Journal of Robotics Research (IJRR)!

-

Our paper "Safe Reinforcement Learning of Dynamic High-Dimensional Robotic Tasks: Navigation, Manipulation, Interaction" has been accepted at ICRA 2023!

-

2022-09-27

I won the "IROS Student Travel Award"!

-

Our paper "Regularized Deep Signed Distance Fields for Reactive Motion Generation" has been accepted at IROS 2022!

-

2022-01-18

Our paper "Dimensionality Reduction and Prioritized Exploration for Policy Search" has been accepted at AISTATS 2022!

-

Our paper "Robot Reinforcement Learning on the Constraint Manifold" has been accepted at CoRL 2021 as an oral presentation and selected as a "Best Paper Award Finalist"!

-

2021-09-30

Our paper "Efficient and Reactive Planning for High Speed Robot Air Hockey" has been accepted at IROS 2021 and selected as a "Best Entertainment and Amusement Paper Award Finalist"!